I wrote this essay with AI; the words below are not my own. This post is just a template for demonstrating the components of my website. I will rewrite it in my own words and thoughts someday, when I get to it!

The Cave We Carry in Our Pockets

Twenty-four centuries ago, Plato described prisoners chained in a cave, mistaking shadows on the wall for reality. They had never seen the fire behind them, let alone the sunlight outside. The allegory was a thought experiment about ignorance, education, and the painful process of coming to know what is real.

Today, we carry the cave in our pockets. The shadows are algorithmic — curated, optimized, personalized. And the chains? They are made of convenience.

“The truth would be literally nothing but the shadows of the images.” (Plato, Republic, Book VII)

The Structure of the Allegory

For those unfamiliar, the allegory unfolds in stages. Each stage maps neatly onto a mode of understanding — and, I will argue, onto a mode of digital engagement.

Chained since birth, the prisoners see only shadows on the wall and hear only echoes. They believe this is all there is.

One prisoner is freed and turns to see the fire — the source of the shadows. The light is blinding, the truth disorienting.

The freed prisoner climbs out of the cave into the sunlight. Gradually, they perceive the world as it truly is.

The philosopher returns to the cave to free the others. But the prisoners, comfortable in their chains, resist — even violently.

Shadows on the Feed

Consider the average social media feed. It presents a world of news, opinions, and images that feels comprehensive but is radically incomplete. The algorithm decides which shadows you see. Like the puppeteers in Plato’s cave, who carry the objects whose shadows fall on the wall, content algorithms shape what we take to be “the world.”

In Plato’s cave, someone controlled the objects that cast shadows. On social media, that role is played by recommendation algorithms. The key difference: the algorithm doesn’t need intent to deceive. It simply optimizes for engagement, and deception emerges as a side effect.

The parallel is not perfect — it never is with allegories — but the structural similarity is striking. We are not forced to look at the feed. But the design of these platforms exploits cognitive biases so effectively that the voluntariness of our attention is questionable at best.

How Deep Do the Chains Go?

Let’s try to measure our entanglement. How much of our information diet is algorithmically mediated?

These numbers are approximate (based on the Reuters Institute Digital News Report 2024), but the pattern is clear: the vast majority of what we “know” about the world arrives through algorithmic curation. We are, in a meaningful sense, watching shadows.

Two Readings of the Return

The most poignant moment in Plato’s allegory is the return — when the philosopher goes back into the cave to liberate the others. The prisoners mock and threaten the returning philosopher. They don’t want to be freed.

There are two ways to read this in the modern context:

On the optimistic reading, education and media literacy can play the role of the philosopher. By teaching critical thinking and digital literacy, we gradually help people “turn around” and see the algorithmic fire for what it is.

On the pessimistic reading, the cave has become too comfortable. Information overload has made people not just ignorant of truth, but actively hostile to it. The philosopher who returns is labeled a conspiracy theorist or an elitist.

Both readings have merit. I tend to lean toward a middle path: liberation is possible, but it requires institutional support, not just individual virtue.

The greatest danger is not misinformation — it is the erosion of the shared epistemological framework that makes truth-seeking possible at all.

Heidegger’s Disclosure and the Feed

Heidegger’s concept of Erschlossenheit (disclosure) offers a useful complement to Plato here. For Heidegger, we don’t simply “discover” a pre-existing truth; rather, truth is disclosed to us through our mode of being-in-the-world.

The algorithm doesn’t just filter truth — it constitutes a mode of disclosure. It shapes the horizon within which things can appear as meaningful at all. When the algorithm decides what is “relevant,” it is not merely hiding some truths while showing others. It is defining the space of possible truths.

“The essence of truth is freedom.” (Martin Heidegger, “On the Essence of Truth”)

If Heidegger is right, then algorithmic curation is not merely an epistemic problem (we believe false things) but an ontological one (the very structure of our world-disclosure is compromised).

Byung-Chul Han and the Transparency Society

The Korean-German philosopher Byung-Chul Han pushes this further. In The Transparency Society, he argues that the demand for total transparency — for everything to be visible, shareable, quantifiable — paradoxically destroys meaning.

Han claims that the age of transparency produces not enlightenment but pornography — i.e., the exposure of everything to view, stripped of all mystery, all depth, all hermeneutic resistance. Shadows, he implies, are not always the enemy. Sometimes meaning requires concealment, ambiguity, withholding. The cave, in a certain light, was at least a space for interpretation.

This is a genuinely unsettling idea. If the shadows in the cave at least provoked interpretation and wonder, then perhaps the flat, over-lit world of algorithmic transparency is worse — not because it shows less, but because it leaves nothing to think about.

Plato’s Allegory Meets Plato’s Dialogue

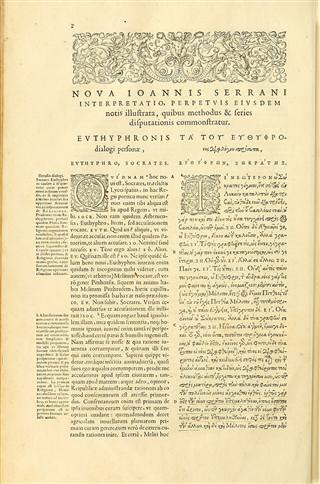

It is worth connecting this to the broader Platonic project. In the Euthyphro, Socrates demonstrates that even confident people — even those about to prosecute their own father — cannot give a coherent account of the concepts they rely on. Euthyphro “knows” what piety is until Socrates starts asking questions.

Euthyphro, by Plato (399 BC). Rating: 9/10.

Social media rewards the Euthyphros of the world: confident, quick, assertive. The Socratic mode — slow, questioning, willing to admit ignorance — is algorithmically disadvantaged. Nuance doesn’t go viral.

So What Do We Do?

I don’t have a neat answer. But I think the allegory suggests a few things:

The allegory of the cave endures not because it gives us answers but because it keeps asking the right question: How do you know that what you think you know is real?

In the age of feeds and filters, that question has never been more urgent.